$1M Lawsuit Hits Tesla After Cybertruck’s ‘Autopilot’ Drives Straight Into Highway Barrier

A Houston woman filed a $1 million lawsuit against Tesla after her Cybertruck, allegedly operating on Autopilot, crashed into a fixed barrier on I-69. This was not a multi-car pileup or a complicated intersection. It was a concrete barrier, sitting exactly where it always sits. The complaint centers on a simple allegation: with Full Self-Driving (often marketed alongside Autopilot) engaged, the vehicle failed to avoid a stationary object on a major freeway. And a recall covering roughly 2 million Teslas suggests this story stretches far beyond Houston.

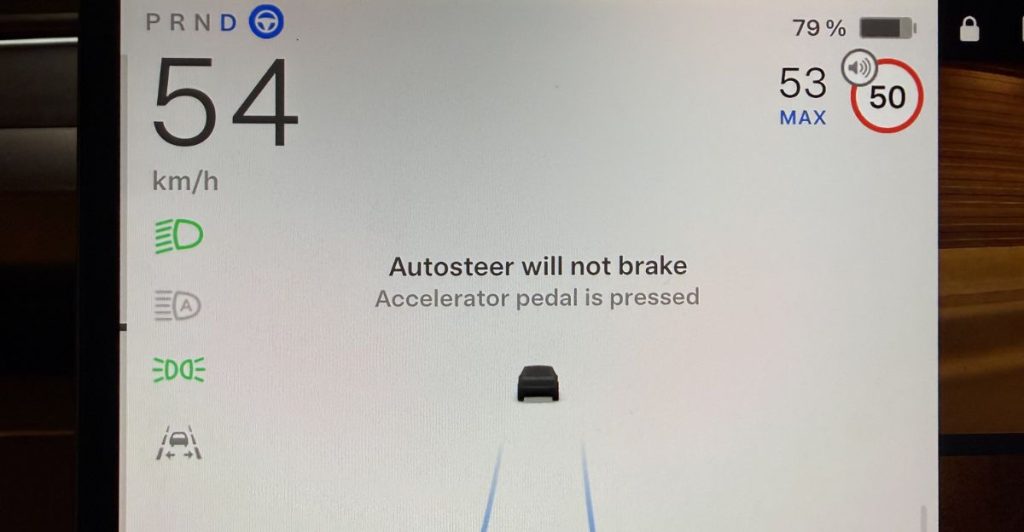

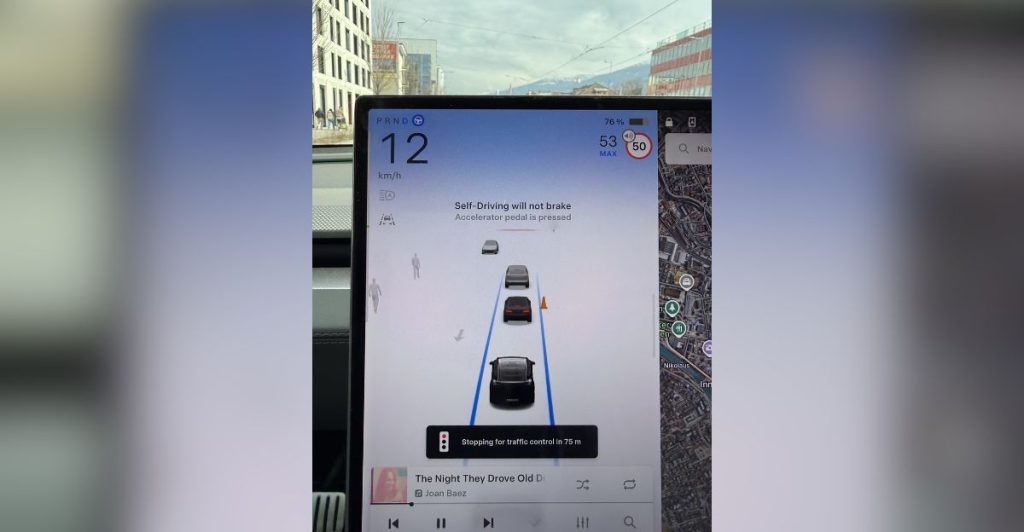

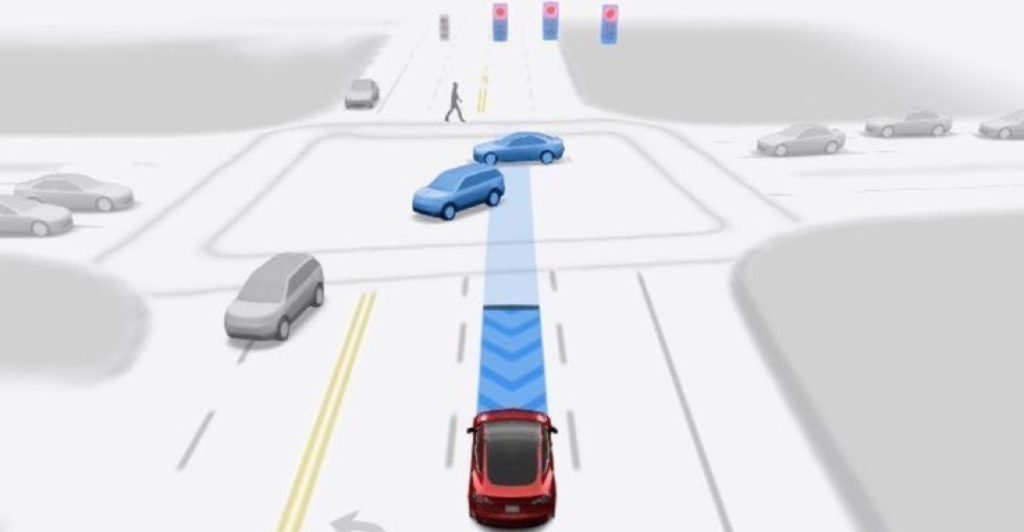

The Blind Spot

The crash fits a pattern federal regulators have tracked for years. NHTSA opened an investigation into Tesla Autopilot crashes involving stationary objects and emergency vehicles, then escalated it to a formal Engineering Analysis. The core concern: the system can fail to respond to fixed hazards, and drivers who have stopped paying attention do not step in before impact. Tesla’s own manual states that Autopilot requires active supervision and does not make the vehicle autonomous. The technology steers, brakes, and accelerates. But when a concrete wall appears, the system expects a human to take over. That handoff is where everything breaks.

Your Commute

For anyone sharing a highway with Teslas on Autopilot, this isn’t abstract. A barrier strike on I-69 means the system allegedly failed to detect or react to a fixed object at freeway speed. Construction zones, Jersey barriers, highway medians: every commuter passes dozens of them daily. The lawsuit alleges that the vehicle’s safety tech caused the crash instead of preventing it. That flips the basic promise of driver assistance on its head. And every driver near a Tesla running Autopilot now has to wonder whether the system will see the next barrier.

Tesla’s Defense

Tesla will almost certainly contest causation and point to its supervision requirements. The owner’s manual is explicit: drivers must keep their hands on the wheel and stay attentive at all times. That language exists for exactly this legal scenario. But Consumer Reports has argued that Tesla’s monitoring system and the “Autopilot” branding itself can enable the very inattention the fine print disclaims. A feature named after aviation automation, paired with monitoring that critics call insufficient, creates a gap between what drivers expect and what the manual demands.

Courtroom Cascade

Here’s where the scope jumps. In a separate case over a fatal Autopilot crash in Florida, a federal judge declined to dismiss the lawsuit at an early stage. A jury later returned a $243 million verdict against Tesla, and the judge upheld that verdict in February 2026. That outcome did more than keep Tesla’s legal exposure alive at the motion stage; it showed a jury was willing to assign substantial liability. Plaintiffs’ attorneys nationwide noticed. Each case that survives early dismissal or ends in a plaintiff-friendly verdict builds a template for the next one. The Houston Cybertruck claim doesn’t exist in isolation. It joins a growing docket where courts are letting juries weigh whether “supervised automation” is a product defense or a marketing contradiction.

The Real Product

Branding. Monitoring. Blame allocation. Those three gears form the machine behind every one of these cases. Tesla calls the feature “Autopilot.” The manual says to supervise constantly. Regulators say the monitoring design can enable misuse. One gear sells the dream. Another disclaims it. The third determines who pays when reality intervenes. A barrier on I-69. A fatal crash in Florida. Two million recalled vehicles. Same three gears, turning the same way every time. The steering tech is secondary. Attention enforcement is the actual product, and it keeps failing the same test.

Human Cost

No direct quote from the plaintiff has surfaced, but the allegation speaks loudly enough. A driver trusted a system called “Autopilot,” and that trust ended at a concrete barrier on a Houston freeway. NHTSA’s investigation specifically flagged driver engagement failures in crashes involving stationary hazards. That phrase, “driver engagement failure,” sanitizes something visceral: a person behind the wheel of a massive truck, relying on software, watching a wall approach. The fear is personal. Anyone who has ever glanced away from the road for two seconds understands it.

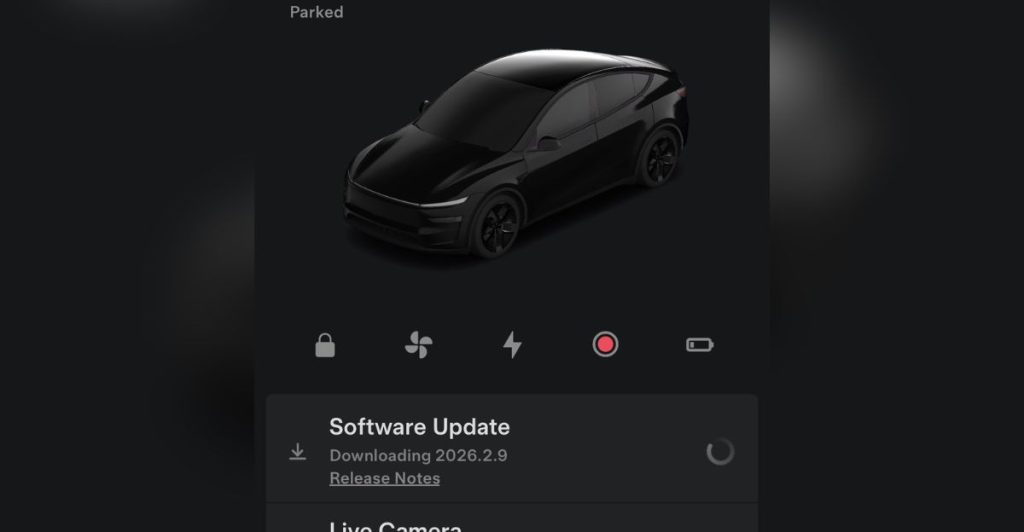

New Rules

Under pressure from NHTSA, Tesla recalled approximately 2 million vehicles in December 2023 to add Autopilot safeguards. That recall is among the largest Tesla recalls by vehicle count. It signals regulators’ view that driver-engagement failures are not isolated incidents but a systemic defect requiring a fleet-wide fix. The escalation of the investigation to an Engineering Analysis, the recall, and the surviving lawsuits together establish a new norm: semi-autonomous features carry product-liability weight. Automakers can no longer hide behind “the driver should have been watching” when regulators say the design invited the distraction.

Winners and Losers

Plaintiffs’ attorneys win leverage every time a case survives dismissal and every time a recall validates their theory. Insurers lose, because Autopilot-involved claims complicate fault models and push premiums higher. Tesla loses twice: recall compliance costs money, and each lawsuit forces discovery into vehicle logs, driver alerts, and engagement data. The biggest losers are consumers who trusted the branding without reading the manual. The biggest irony: safety technology designed to reduce crashes now generates a self-reinforcing legal cycle. More crashes feed more investigations, which feed more plaintiff leverage.

Still Breaking

The cascade continues. Automakers across the industry are tightening driver monitoring, geofencing assistance features, and adding more on-screen warnings, all because this blame-allocation machine keeps producing the same result. Tesla’s next move will likely involve stricter driver-attention enforcement, which would quietly admit the old system wasn’t strict enough. Meanwhile, as the Houston lawsuit moves toward discovery, vehicle logs could reveal exactly what happened in the seconds before impact. One Cybertruck. One barrier. And a ripple that now touches regulators, courtrooms, insurers, and every driver sharing the road with supervised automation.

Sources:

“Houston woman sues Tesla after Cybertruck Autopilot crash.” Houston Chronicle, 9 Mar 2026.

“Tesla recalls nearly all 2 million of its vehicles on US roads to limit Autopilot.” CNN, 13 Dec 2023.

“Autopilot & First Responder Scenes – Upgrade of PE21-020 to Engineering Analysis EA22-002.” National Highway Traffic Safety Administration (NHTSA) ODI Document, 7 Jun 2022.