Tesla’s ‘Full Self-Driving’ Marketing Found Deceptive After 467 Crashes—$243M Verdict Tests 50 Years Of Driver-Liability Law

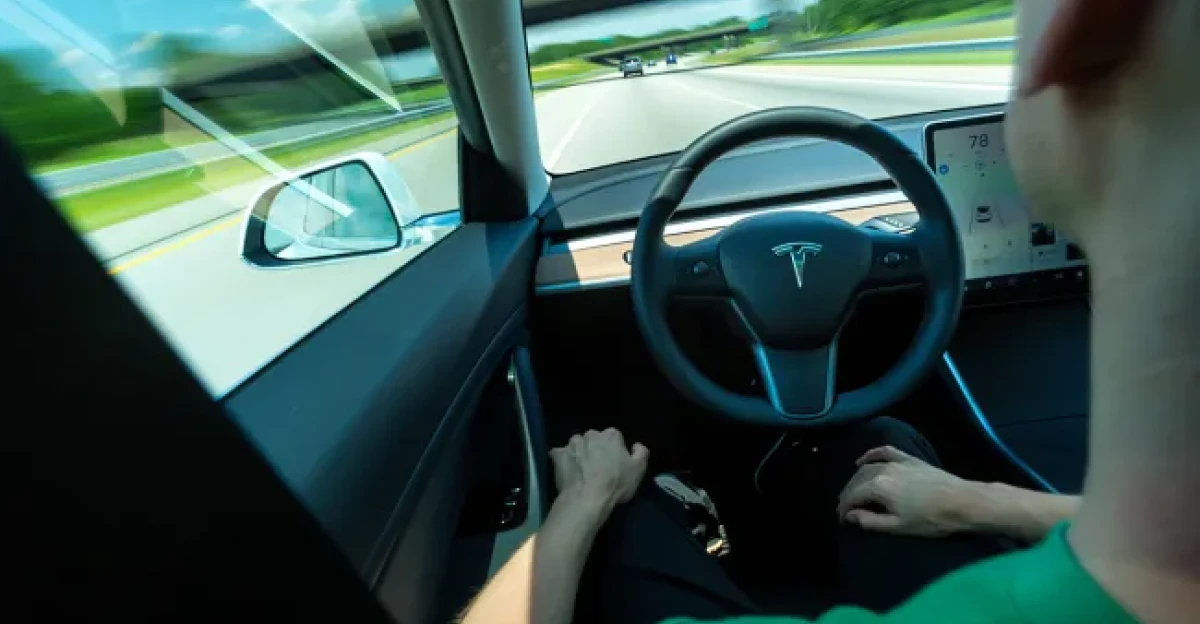

Tesla built its reputation on ambitious promises about automated driving. One phrase carried unusual weight: “Full Self-Driving.” The name suggested a future where a car could handle much of the road without constant human control. Safety investigators later linked hundreds of crashes to Tesla driver assistance systems. A federal jury verdict of $243 million forced a new legal debate about responsibility when automated features fail during real driving. The ruling now shapes how courts, insurers, and regulators examine automation claims. The chain of events begins with the name itself.

The Name That Promised Autonomy

A product called Full Self-Driving reached millions of drivers, many of whom read those words and assumed extensive automation. Tesla marketing once described a future where a driver could enter a destination and let the car complete the trip. That message remained online as the National Highway Traffic Safety Administration compiled crash records tied to Tesla’s Autopilot driver assistance system. Investigators documented 467 crashes in which Autopilot was reported as engaged, 13 of them fatal. Federal reports described incidents involving traffic signals, construction zones, and a 2016 collision with a tractor-trailer set against a brightly lit sky.

Early Crashes Draw Federal Attention

The first widely reported Autopilot fatality occurred on May 7, 2016, in Williston, Florida. Tesla stated, “neither Autopilot nor the driver noticed the white side of the tractor-trailer against a brightly lit sky, so the brake was not applied.” Federal investigators examined how the system interpreted the scene. Another fatal crash followed on March 1, 2019, in Delray Beach, Florida, when a Tesla Model 3 with Autopilot engaged struck a tractor-trailer at highway speed. Investigators reported no braking before impact. These incidents brought closer scrutiny from regulators and courts.

Regulators Challenge The Marketing

Regulatory pressure increased several years later. On July 28, 2022, the California Department of Motor Vehicles filed accusations that Tesla misled consumers about automation capabilities. In a ruling made public in December 2025, an administrative law judge concluded that vehicles equipped with Autopilot and marketed with “Full Self-Driving Capability” “could not, at the time of those advertisements, and cannot now, operate as autonomous vehicles.” The ruling recommended suspending Tesla’s dealer license for 30 days. Tesla later adjusted parts of its marketing language. The judge’s decision placed formal scrutiny on how automated features were described to consumers.

A Jury Assigns Shared Responsibility

A federal jury in Miami examined a fatal 2019 crash involving Tesla driver assistance technology. Jurors concluded that both driver behavior and system design contributed to the outcome, and they assigned Tesla 33% of the liability for the crash. Damages against Tesla came to $43 million in compensatory payments and $200 million in punitive penalties, for a combined judgment of $243 million. A judge later upheld the full award. Courts had long treated drivers as the primary party responsible for collisions. The Miami verdict extended product liability to automated systems in a way earlier crash cases had not.

How Liability Law Expanded

Product liability law has long allowed lawsuits against manufacturers when defective design contributes to harm. Vehicle crash cases, however, have usually centered on driver responsibility. Automated driver assistance introduced a different situation. Tesla vehicles using Level 2 systems still require constant driver supervision, yet marketing language suggested greater independence from the driver. Lawyers argued that the gap between the system’s classification and its marketing encouraged foreseeable misuse. Jurors accepted that argument in the Miami case. The verdict applied established product liability doctrine to software-controlled vehicle systems operating on public roads.

Automation Adoption Continues Growing

Driver assistance technology continues to expand across the American vehicle market. Automakers install Level 2 systems that assist with steering, braking, and lane control. Industry forecasts expect tens of millions of vehicles with advanced assistance features to operate on United States roads by the mid-2030s. These systems remain legally classified as driver assistance, and drivers retain responsibility for monitoring the vehicle. Court rulings now influence how manufacturers describe capabilities and limitations. The growing presence of automated features also raises the stakes for insurers evaluating crash risk.

Insurance Industry Responds Quickly

Insurers began responding to automated driving data during 2026. Lemonade introduced an insurance product for Tesla drivers in Arizona on January 26, 2026. The program offered approximately 50% lower per-mile premiums for drivers using Full Self-Driving features. Pricing relied on telemetry from the vehicle rather than traditional risk models alone. A Lemonade co-founder said, “The safer FSD software becomes, the more our prices will drop.” Insurers gained access to operational data previously controlled by automakers. That information allowed companies to adjust risk models faster than legislation could change.

China Uses Regulatory Policy

China approached automated driving through regulatory policy. National standards addressing Level 3 automated driving clarified when manufacturers can bear legal responsibility if automation operates within approved parameters. Authorities also began phasing in data recorders designed to capture system performance during crashes. Insurance requirements for automated vehicle operations increased as the technology expanded. At the same time, pilot programs for Level 4 logistics vehicles launched across multiple Chinese cities. These programs allow regulators to monitor automated systems in commercial service before wider deployment.

Automakers Consider Higher Automation

Legal exposure surrounding Level 2 and Level 3 automation has influenced development strategies. Some companies are considering accelerating work toward Level 4 systems that remove human supervision during defined operations. Waymo robotaxi data provided one widely cited comparison. Insurance analysis from Swiss Re reported 88% fewer property damage claims and 92% fewer bodily injury claims for Waymo vehicles than for human-driven vehicles. Legislators in the United States also began debating national automated vehicle rules. Versions of the SELF DRIVE Act entered congressional discussion during early 2026.

Two Liability Systems Now Operate

Automated driving technology now exists within two overlapping legal interpretations. Traditional vehicle law places primary responsibility on the driver. Product liability rulings allow courts to assign responsibility to manufacturers when system design or marketing contributes to misuse. The $243 million Miami verdict strengthened that second interpretation. Attorneys representing plaintiffs in multiple Autopilot and Full Self-Driving lawsuits began citing the case during negotiations and pretrial filings. Each new case may refine how responsibility is divided between drivers and technology companies in automated vehicles.

Sources:

Tesla Must Pay $243 Million Judgement Over Fatal 2019 Autopilot Crash. Singleton Schreiber, 19 February 2026

DMV Finds Tesla Violated California State Law. California Department of Motor Vehicles, 16 December 2025

California judge says Tesla engaged in deceptive Autopilot marketing. CNBC, 16 December 2025

Tesla Autopilot investigation closed after feds find 13 fatal crashes. TechCrunch, 25 April 2024

Lemonade Unveils Autonomous Car Insurance, Slashing Rates for Tesla FSD. Business Wire, 20 January 2026

Waymo still doing better than humans at preventing injuries and property damage. The Verge, 19 December 2024